August 21, 2025

Generative artificial intelligence is rapidly reshaping mobile technology. Today, sophisticated AI features mostly depend on powerful remote servers, but Google aims to run more of them directly on smartphones. At the Google I/O event, the company is expected to reveal a new set of developer APIs built around its Gemini Nano model for local AI processing. The move reflects Google’s push to deliver advanced AI features on the device itself, which keeps user data local and reduces an application’s dependence on the cloud.

Unlocking Local AI Potential

Publicly available Google developer documentation reveals details about upcoming AI features for Android devices. According to Android Authority, an upcoming ML Kit SDK update will add API support for on-device generative AI using the Gemini Nano model. The new framework builds on Google’s AI Core, the same foundation as the experimental AI Edge SDK, but it differs in plugging into an existing model and exposing a clearly defined set of features. That approach should simplify implementation and make on-device AI more accessible to mobile application developers.

Key Features Coming to Mobile

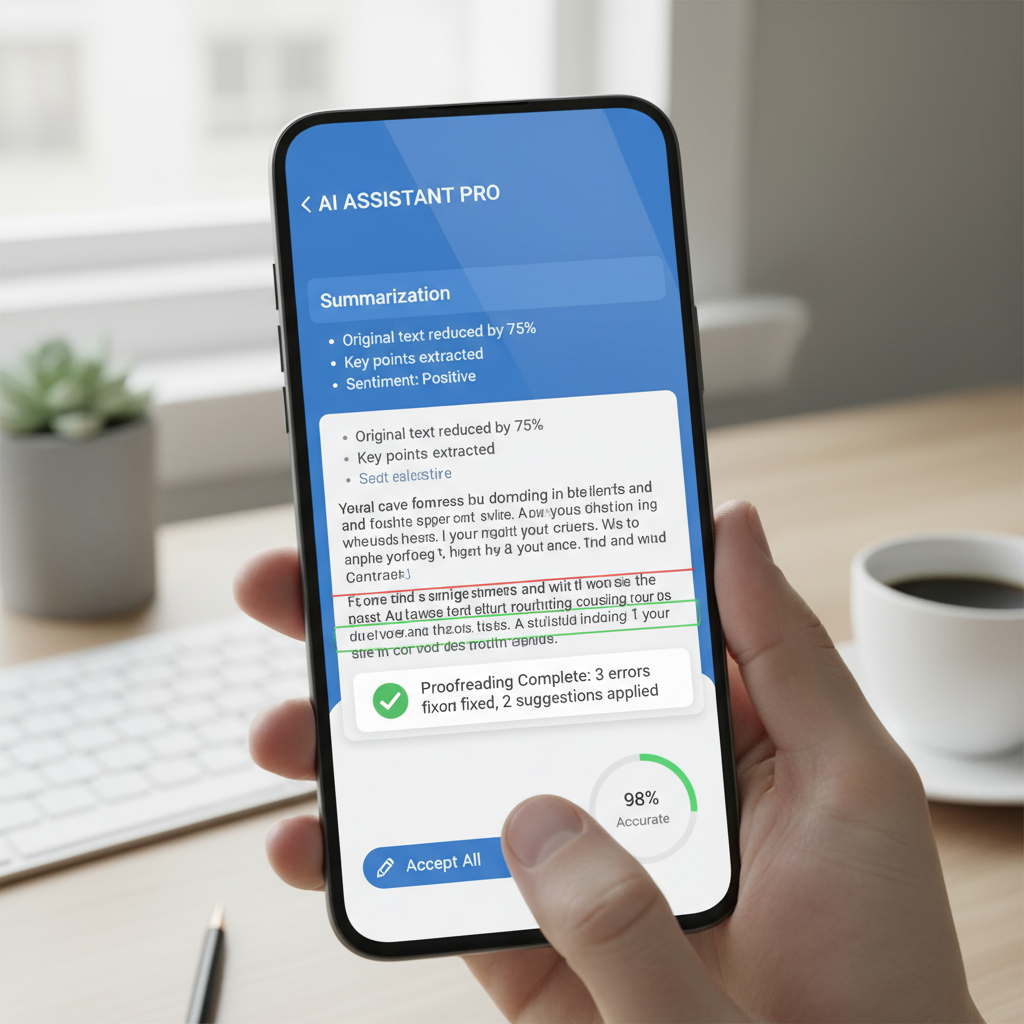

Google’s documentation for the new ML Kit GenAI APIs describes features that run directly on the device, so applications do not have to send user data to the cloud for processing. The APIs can summarize long text, suggest corrections for grammar and spelling mistakes, rewrite sentences in alternative forms, and generate text descriptions of images.

Because mobile hardware has limited memory and processing power, Gemini Nano runs with some restrictions. Summaries are capped at three bullet points, and image description will initially be available only in English. Output quality may also vary slightly depending on which version of Gemini Nano a phone runs. The standard Gemini Nano XS requires about 100MB of storage, while the Gemini Nano XXS found in the Pixel 9a needs only 25MB, handles text only, and has a smaller context window.
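The constraints above can be written down as a small capability table. The following is a minimal sketch in Python, not ML Kit code: the names `NanoVariant`, `is_supported`, and `clamp_summary` and the feature strings are hypothetical, and only the figures (100MB, 25MB, three bullets, English-only image description) come from the reporting. It also assumes that Gemini Nano XS can handle images, which is inferred from the fact that only XXS is described as text-only.

```python
from dataclasses import dataclass

# Figures reported in the article; everything else here is illustrative.
MAX_SUMMARY_BULLETS = 3               # summaries are capped at three bullet points
IMAGE_DESCRIPTION_LANGUAGES = {"en"}  # image description is English-only at first

# Hypothetical feature names, not ML Kit identifiers.
TEXT_FEATURES = {"summarize", "proofread", "rewrite"}
IMAGE_FEATURES = {"describe_image"}


@dataclass(frozen=True)
class NanoVariant:
    name: str
    approx_size_mb: int
    text_only: bool


# Assumption: XS is treated as image-capable because only XXS is
# described as text-only.
VARIANTS = {
    "XS": NanoVariant("Gemini Nano XS", 100, text_only=False),
    "XXS": NanoVariant("Gemini Nano XXS", 25, text_only=True),
}


def is_supported(feature: str, variant_key: str, language: str = "en") -> bool:
    """Return True if the feature could run on the given model variant."""
    variant = VARIANTS[variant_key]
    if feature in TEXT_FEATURES:
        # No language limit is reported for text features, so none is modeled.
        return True
    if feature in IMAGE_FEATURES:
        return not variant.text_only and language in IMAGE_DESCRIPTION_LANGUAGES
    raise ValueError(f"unknown feature: {feature}")


def clamp_summary(bullets: list[str]) -> list[str]:
    """Keep at most MAX_SUMMARY_BULLETS items, mirroring the reported cap."""
    return bullets[:MAX_SUMMARY_BULLETS]
```

An application could consult a table like this before showing a feature in its interface, for example hiding image description on a device that ships the text-only XXS variant.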

The shift matters across the Android ecosystem because the ML Kit SDK works on devices beyond Google’s Pixel line. Manufacturers such as OnePlus, Samsung, and Xiaomi are building support for Gemini Nano into their next-generation smartphones, following its rollout on Pixel devices. As more Android phones support Google’s local AI model, developers will be able to reach a larger and more varied group of users.

Android app developers who want to add on-device generative AI currently face several obstacles. Google’s experimental AI Edge SDK lets developers use the dedicated Neural Processing Unit (NPU) to run AI models, but it is available only on Pixel 9 devices and is limited to text, which narrows its usefulness. Chipmakers such as Qualcomm and MediaTek offer their own APIs for AI workloads, but features and behavior vary across chips and devices, which makes these fragmented systems a poor foundation for long-term development. Building and running a custom AI model demands specialized knowledge that many app teams do not have. The new APIs, built on the Gemini Nano model, should make local AI much easier to implement for developers from a wide range of backgrounds.

The launch of standardized APIs for Gemini Nano is an important step toward mobile experiences with built-in AI features and better privacy and efficiency. On-device processing involves trade-offs compared with cloud-based systems, but it marks a shift toward a more local and potentially more secure framework for AI-driven mobile applications. How widely the technology is adopted will depend on Google’s collaboration with Original Equipment Manufacturers (OEMs) to support Gemini Nano across Android devices. Some companies may pursue different technological directions, and older devices may lack the processing power to run AI locally.
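Since not every device will be able to run the model locally, an application needs a plan for when on-device inference is unavailable. The sketch below shows one possible pattern in Python: prefer the local model and fall back to a remote one. The function `run_with_fallback` and both callables are hypothetical stand-ins, not part of ML Kit or any Google API, and the use of `RuntimeError` to signal a local failure is an assumption made for illustration.

```python
from typing import Callable, Optional


def run_with_fallback(
    prompt: str,
    on_device: Optional[Callable[[str], str]],
    cloud: Callable[[str], str],
) -> tuple[str, str]:
    """Prefer local inference; use the cloud when it is unavailable.

    Returns (source, result) so the caller can tell where the text came from.
    Pass on_device=None for devices with no local model.
    """
    if on_device is not None:
        try:
            return "on-device", on_device(prompt)
        except RuntimeError:
            # Assumed failure mode: the local model could not serve the request.
            pass
    return "cloud", cloud(prompt)
```

Falling back to a server gives up the privacy benefit of local processing, so an application that promises on-device handling may prefer to disable the feature on unsupported hardware instead.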